The life of an IT professional is fraught with danger and angst, not to mention a near-constant suppression of the urge to yell ‘It doesn’t work like that’ whenever tech is represented in the non-tech media. Mostly these are harmless frustrations, like Jeff Goldblum being able to connect his Powerbook 5300 to an alien spaceship, when we regular humans couldn’t connect the damn thing to a printer.

But when the problems are not fictional, they become more serious. On Monday 5th October 2020, we discovered that a ‘computer glitch’ had caused nearly sixteen thousand COVID-19 cases to go unreported by Public Health England. But while a ‘computer glitch’ makes one think of poorly written code, solar radiation bit-flipping and the like, this wasn’t a ‘computer glitch’ at all.

It was a human glitch. I spend a lot of time working with organisations on IT, with an approach that takes into account people, process and technology. All three are vital factors in the successful use of IT, and more often than not, failures are the result of people or process, rather than the underlying hardware and software.

What happened?

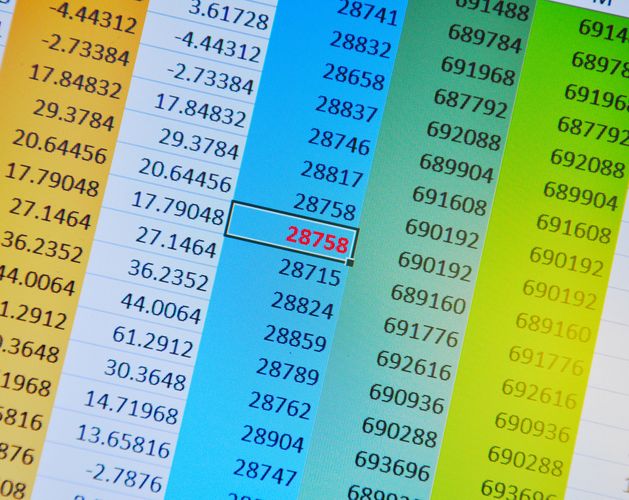

In a nutshell, here’s what happened: Public Health England needed to compile the testing data being submitted by their commercial partners, and then share this with NHS groups, government and centralised Test and Trace systems. They built a workflow to do this, except that while the partners were submitting their data as .CSV, the workflow was transforming it into .XLS. To a layman, these are all ‘Excel’, and many people opening a .CSV, .XLS or .XLSX file won’t notice the difference, as they all open in the same application. But crucially, they are not the same. A .CSV is plain text with no built-in size limit, whereas the legacy .XLS format caps each worksheet at 65,536 rows (against 1,048,576 in .XLSX), so larger datasets were truncated when being transformed, resulting in test results not being passed on.
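To make the failure mode concrete, here is a minimal Python sketch of the kind of guard that would have turned a silent truncation into a loud error. It is not PHE's actual workflow, which has not been published in detail; the names `count_csv_rows`, `check_fits` and `CapacityError` are invented for illustration. The only hard facts in it are the two worksheet row limits.

```python
import csv
import io

# Worksheet row limits of the two Excel formats (the header row counts too).
XLS_MAX_ROWS = 65_536
XLSX_MAX_ROWS = 1_048_576


class CapacityError(ValueError):
    """Raised when a dataset will not fit in the target format (hypothetical name)."""


def count_csv_rows(csv_text: str) -> int:
    """Count the records in a block of CSV text, header included."""
    return sum(1 for _ in csv.reader(io.StringIO(csv_text)))


def check_fits(row_count: int, max_rows: int = XLS_MAX_ROWS) -> int:
    """Return row_count unchanged, or raise rather than let rows be dropped."""
    if row_count > max_rows:
        raise CapacityError(
            f"{row_count} rows exceed the {max_rows}-row limit; "
            f"{row_count - max_rows} rows would be lost"
        )
    return row_count
```

Calling `check_fits(count_csv_rows(data))` before any conversion means a file of, say, 70,001 rows stops the pipeline with an error instead of quietly losing everything past row 65,536. Passing `XLSX_MAX_ROWS` as the limit would let that same file through, which is a reminder that a bigger spreadsheet only moves the cliff edge further away.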

Now, this is a relatively common and simple failing, but one that, given the magnitude and severity of what is being dealt with, should not have been allowed to happen. Yes, Microsoft Excel CAN do everything, but that doesn’t mean you should use it for everything.

It is easy enough to imagine how this system came about, and in the face of an unprecedented pandemic, a bias for action in the public health space is no bad thing to have. But as we all know, a stopgap solution can quickly become the long-term solution, as people move on to other projects and we all say ‘well it works’ right up until the moment it no longer does.

So what lessons can we learn, or at least reaffirm, when presented with this case?

Firstly, there is the importance of strong processes and process design. These processes must be well documented, well understood, and make sense. If possible, they should be adaptable to enable re-use. If strong processes and process-driven behaviours are established in normal operations, they are there to lean on in times of crisis. After all, while the COVID-19 test and trace system is new and without precedent, the need to manage, transform and share data from and to third parties is nothing new, in healthcare or anywhere else.

If you need to use a stopgap or interim system, you need to have a plan to replace it. Temporary fixes must be just that, because a quick fix usually means skipping some of the design, assurance, testing, or other steps that are in place to ensure robust solutions. If you release something that you know is a house of cards (and using Excel to handle test and trace data is clearly a house of cards), you must pay off your technical debt and replace the system with something more robust BEFORE the house of cards tumbles.

You also need broad technical oversight, and diversity of knowledge, background and experience in your technical teams. Diversity enables greater breadth in evaluating a system or project, and makes it more likely that critical issues will be noticed and resolved before they become failures. You need to back this up with a strong culture that enables people to openly speak up when they notice issues, and report failures in the knowledge that they will not be victimised for doing so, and that their concerns will be taken seriously, not swept under the carpet.

Finally, you need platforms. Building a new ‘thing’ every time the mission changes is wasteful, time-consuming, inflexible, and borderline insane. When it comes to repeatable tasks, such as the integration of third-party data into the organisation, there should be well established, robust processes and standards in place. That means not only a defined description of how the solution works and what its restrictions are, but also information to send to suppliers, public-facing documentation, and user guides.
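As a rough illustration of what an explicit standard for third-party data might look like in practice, the Python sketch below checks a supplier's CSV against a written-down contract before it goes anywhere near the rest of the pipeline. Everything in it is an assumption made for the example: `SubmissionStandard`, `validate_submission` and the column names are hypothetical and do not describe any real PHE or NHS interface.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class SubmissionStandard:
    """A hypothetical, explicit contract for supplier files."""
    required_columns: tuple[str, ...]
    max_rows: int


def validate_submission(csv_text: str, standard: SubmissionStandard) -> list[str]:
    """Return human-readable problems; an empty list means the file conforms."""
    problems: list[str] = []
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []

    # Columns the standard demands but the file does not provide.
    missing = [c for c in standard.required_columns if c not in header]
    if missing:
        problems.append(f"missing columns: {', '.join(missing)}")

    row_count = 0
    # Record 1 is the header, so data records are numbered from 2.
    for record_no, row in enumerate(reader, start=2):
        row_count += 1
        empty = [
            c for c in standard.required_columns
            if c in header and not (row.get(c) or "").strip()
        ]
        if empty:
            problems.append(f"record {record_no}: empty value for {', '.join(empty)}")

    if row_count > standard.max_rows:
        problems.append(f"{row_count} rows exceeds the agreed maximum of {standard.max_rows}")
    return problems
```

The same `SubmissionStandard` object can drive the supplier documentation and the automated check, so the restriction a partner reads about is the one the system actually enforces.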

These platforms should take into account people, process and technology, and make standards clear and explicit. By taking into account a broad range of factors, and establishing correct baselines, you ensure strong standards and reduce the chances of failure and rework. Oh, and always remember, Excel is not a database.